Google Page Rank Check Tool - Free Online SEO Tool


Check the PageRank of your website pages instantly:

Latest SEO News Updates


Search engine optimization (SEO) involves designing, writing, and coding a website in a way that improves the volume and quality of traffic it receives from people using search engines.

While search engine optimization is the focus of this booklet, keep in mind that it is one of many marketing techniques. A brief overview of other marketing techniques is provided at the end of this booklet. Effective SEO techniques help you bring more potential customers to your website.

Websites with higher rankings (i.e. those presented higher in the search results) are seen by a larger number of people, who are then more likely to visit the site. Define your market before you engage in SEO techniques.

SEO involves a wide range of techniques, some of which you may be able to do yourself and others that require web development expertise. Techniques include increasing links from other websites to your web pages, editing the content of the website, reorganizing the structure of the website, and making coding changes. SEO also involves addressing problems that might prevent search engines from fully “crawling” a website.

As was mentioned, one of the most critical ways to improve your website’s ranking in the search engine results pages is improving the number and quality of websites that link to your site.

Google PageRank is a system for ranking web pages used by the Google search engine. PageRank assesses the number and quality of web pages that link to your web pages. Because Google is currently the most popular search engine worldwide, according to comScore Inc. (Press Release, June 2008), the PageRank your web pages receive will affect the number of visitors to your website. Techniques to improve your page rank are discussed below.
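To make the idea of link-based ranking concrete, here is a minimal sketch of the simplified, publicly described PageRank iteration. It is not Google's production system. The page names, the `pagerank` function, the damping factor of 0.85, and the iteration count are all assumptions chosen for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by repeated redistribution of rank.

    `links` maps each page to the list of pages it links to.
    All names and defaults here are illustrative assumptions.
    """
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page starts each round with a small baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its rank evenly among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page site: "home" receives the most inbound links.
example_links = {
    "home": ["about", "blog"],
    "about": ["home"],
    "blog": ["home", "about"],
    "orphan": ["home"],
}
ranks = pagerank(example_links)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.4f}")
```

In this toy graph the page with the most inbound links ends up with the highest score, and the page nobody links to ends up with the lowest, which is the intuition behind building links from other sites.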


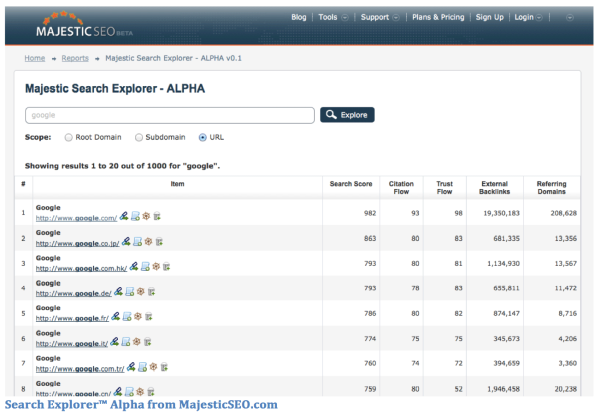

Majestic SEO, a popular link explorer tool for SEOs and webmasters, announced the launch of Search Explorer. Search Explorer is a search engine designed to let SEOs and webmasters search by keyword or phrase, with results ranked by Majestic SEO’s own linkage metrics. The purpose is to show which websites rank highest for a specific keyword, based mostly on Majestic SEO’s own linkage data.

If our talking about Penguin 5 in reference to something Google is calling Penguin 2.1 hurts your head, believe us, it hurts ours, too. But you can pin that blame back on Google. Here’s why.

Google Inc. is an American multinational corporation specializing in Internet-related services and products. These include search, cloud computing, software, and online advertising technologies.

The corporation has been estimated to run over one million servers in data centers around the world and to process over one billion search requests. Google also owns sites such as YouTube and Blogger.

There are four major functions in the Google search process. Two of them, crawling and indexing, are covered here.

The journey of a query starts before you ever type a search, with crawling and indexing the web of trillions of documents.

Google Crawling

We use software known as “web crawlers” to discover publicly available webpages. The most well-known crawler is called “Googlebot.” Crawlers look at webpages and follow links on those pages, much like you would if you were browsing content on the web. They go from link to link and bring data about those webpages back to Google’s servers.

The crawl process begins with a list of web addresses from past crawls and sitemaps provided by website owners. As our crawlers visit these websites, they look for links to other pages to visit. The software pays special attention to new sites, changes to existing sites, and dead links.

Computer programs determine which sites to crawl, how often, and how many pages to fetch from each site. Google does not accept payment to crawl a site more frequently for our web search results. We care more about having the best possible results because in the long run that’s what’s best for users and, therefore, our business.
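The crawl loop described above can be sketched in a few lines. This is only an illustration of the general technique (start from seed addresses, fetch a page, follow its links, record dead links), not how Googlebot is implemented. The `crawl` function, the `LinkExtractor` class, the `FAKE_WEB` dictionary, and the `example.test` addresses are hypothetical, and the "fetch" step reads from an in-memory dictionary instead of the network so the example runs anywhere.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seed_urls, fetch, max_pages=100):
    """Breadth-first crawl starting from `seed_urls`.

    `fetch(url)` must return the page HTML, or None for a dead link.
    Returns the fetched pages and the list of dead links found.
    """
    queue = deque(seed_urls)
    seen = set(seed_urls)
    pages = {}
    dead_links = []

    while queue and len(pages) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            dead_links.append(url)
            continue
        pages[url] = html

        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            target = urljoin(url, href)  # resolve relative links
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return pages, dead_links


# Hypothetical three-page site with one broken link.
FAKE_WEB = {
    "http://example.test/": '<a href="/a">A</a> <a href="/b">B</a>',
    "http://example.test/a": '<a href="/">home</a> <a href="/missing">gone</a>',
    "http://example.test/b": "<p>no links here</p>",
}

pages, dead_links = crawl(["http://example.test/"], FAKE_WEB.get)
print(sorted(pages))
print(dead_links)
```

A real crawler would also respect robots.txt, limit its request rate, and decide how often to revisit each site. Those policies are left out of this sketch.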

Organizing webpage information by indexing

The web is like an ever-growing library with billions of books and no central filing system. Google essentially gathers the pages during the crawl process and then creates an index, so we know exactly how to look things up. Much like the index in the back of a book, the Google index includes information about words and their locations. When you search, at the most basic level, our algorithms look up your search terms in the index to find the appropriate pages.
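The "index in the back of a book" idea corresponds to a data structure usually called an inverted index: a mapping from each word to the documents and positions where it appears. The sketch below is a toy version under stated assumptions. The function names `tokenize`, `build_index`, and `search`, and the sample documents, are hypothetical, and a real search index stores far more than word positions.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(documents):
    """Map each word to {document id: [positions of the word]}."""
    index = defaultdict(lambda: defaultdict(list))
    for doc_id, text in documents.items():
        for position, word in enumerate(tokenize(text)):
            index[word][doc_id].append(position)
    return index


def search(index, query):
    """Return the ids of documents that contain every query term."""
    terms = tokenize(query)
    if not terms:
        return set()
    results = None
    for term in terms:
        docs = set(index.get(term, {}))
        results = docs if results is None else results & docs
    return results


# Hypothetical sample pages.
documents = {
    "page1": "Dogs are loyal pets",
    "page2": "A list of dog breeds and dogs photos",
    "page3": "Cats and dogs",
}
index = build_index(documents)

print(sorted(search(index, "dogs")))         # pages containing "dogs"
print(sorted(search(index, "dogs breeds")))  # pages containing both words
```

Looking a word up in the index is a single dictionary access, which is why a search does not need to rescan every page.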

The search process gets much more complex from there. When you search for “dogs” you don’t want a page with the word “dogs” on it many times. You probably want pictures, videos, or a list of breeds. Google’s indexing systems note many different aspects of pages, such as when they were published, whether they contain pictures and videos, and much more. With the Knowledge Graph, we’re continuing to go beyond keyword matching to better understand the people, places, and things you care about.

Google’s Matt Cutts announced late on Friday that Penguin 2.1 was launching, affecting roughly 1% of searches “to a noticeable degree.” This is the first official Penguin announcement we’ve seen since Google revealed its initial Penguin 2.0 in May. Penguin 2.0 was the biggest tweak to Penguin since the update initially launched in April of last year, which was why it was called 2.0 despite the update getting several refreshes in between.

Cutts said this about Penguin 2.0 back when it rolled out: “So this one is a little more comprehensive than Penguin 1.0, and we expect it to go a little bit deeper, and have a little bit more of an impact than the original version of Penguin.”

Penguin 2.0 was said to affect 2.3% of queries, with previous data refreshes only impacting 0.1% and 0.3%. The initial Penguin update affected 3.1%. While this latest version (2.1) may not be as big as 2.0 or the original, the 1% of queries affected still represents a significantly larger query set than the other past minor refreshes. Hat tip to Danny Sullivan for the numbers. The folks over at Search Engine Land, by the way, are keeping a list of version numbers for these updates, which differs from Google’s actual numbers, so if you’ve been going by those, Danny sorts out the confusion for you.

Penguin, of course, is designed to attack webspam. Here’s what Google said about it in the initial launch: “The change will decrease rankings for sites that we believe are violating Google’s existing quality guidelines. We’ve always targeted webspam in our rankings, and this algorithm represents another improvement in our efforts to reduce webspam and promote high quality content. While we can’t divulge specific signals because we don’t want to give people a way to game our search results and worsen the experience for users, our advice for webmasters is to focus on creating high quality sites that create a good user experience and employ white hat SEO methods instead of engaging in aggressive webspam tactics.”


I am Chenthil Vel Murugan, and I have worked as a senior SEO specialist for the past 7 years. I can optimize any website to rank in major search engines such as Google, Yahoo, and Bing. If you need help with SEO or development, please contact me through Webmaster Contact.